Does ChatGPT Save Your Data?

Yes, ChatGPT saves your data. Read this article to learn more about how ChatGPT processes your data and ways to secure it.

Yes, ChatGPT stores your data. Every prompt, file upload, image, and conversation you send to ChatGPT is logged on OpenAI's servers. Depending on your plan and settings, it may be retained for OpenAI's standard 30 days, used to train future models, or preserved indefinitely under court order regardless of any user setting.

Updated for 2026, this guide covers what data ChatGPT stores, where it is stored, what OpenAI does with it, the active legal preservation order from the New York Times v. OpenAI litigation, the ChatGPT Memory feature, the privacy carve-outs in ChatGPT Enterprise and Team, and — most importantly — how to keep sensitive data out of ChatGPT in the first place using browser-level data security.

ChatGPT collects and stores two main categories of data: information received automatically and information actively provided by users.

Even when chat history is deleted or disabled, ChatGPT retains conversation data for up to 30 days for monitoring purposes. During that window the stored data is a potential target for attackers, so users should think carefully before including sensitive information in a prompt.

For businesses concerned about data security, Strac’s ChatGPT DLP solution offers proactive safeguarding of sensitive information during ChatGPT interactions.

No, ChatGPT does not sell user data. OpenAI, the company behind ChatGPT, has explicitly stated in its privacy policy that it does not sell user data or share content with third parties for marketing purposes. This commitment to data privacy is an essential aspect of OpenAI’s business model and user trust.

However, it’s crucial to understand that while OpenAI doesn’t sell data, it does use the information collected for various purposes, including:

While these uses provide OpenAI with significant latitude in how it handles user data, the company maintains that it does not monetize this information through direct sales to third parties.

For organizations looking to enhance their data protection strategies, Strac’s SaaS DLP solution offers comprehensive protection across various cloud applications, including AI platforms like ChatGPT.

Strac Chrome extension DLP for ChatGPT: detect and redact PII and other sensitive data such as PHI and PCI

ChatGPT uses the data it collects primarily to improve its AI model and enhance user experience. Here’s how ChatGPT utilizes user data:

It’s important to note that users can opt out of having their data used for training purposes through OpenAI’s privacy portal. For enterprise users, the default setting is that input will not be used for training.

For businesses concerned about data usage in AI platforms, Strac’s Data Security Posture Management (DSPM) solution can help monitor and manage data security across various cloud services, including AI platforms.

ChatGPT doesn’t train on documents stored in your company’s knowledge base. But if the knowledge base is connected, the model can read those documents during a conversation to generate answers.

That’s where the real risk comes from. If sensitive data lives in tools like Google Drive, Notion, Confluence, or Slack, it can be unintentionally exposed through prompts or responses.

Strac helps reduce that risk by:

In short: if your knowledge base connects to AI tools, it should be treated as part of your DLP and DSPM strategy.

ChatGPT, Codex, desktop AI apps, and coding agents are no longer isolated chat tools. They can now connect directly to your local environment, SaaS apps, cloud storage, codebases, and internal systems.

That means AI can access:

This boosts productivity, but also creates a major security risk.

Sensitive data like PII, PHI, PCI, secrets, payroll files, customer records, and source code can flow directly into the AI context window.

Traditional DLP often misses this because the traffic flows through connectors, APIs, local tools, or AI workflows rather than standard email or browser uploads.

Strac protects these AI workflows by scanning content in real time before it reaches the model.

It can:

For example, a user asks ChatGPT to open a payroll file from SharePoint.

Strac scans the file first and removes SSNs and other sensitive data, so only safe content reaches the model.
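The scan-then-forward step in the payroll example can be illustrated with a minimal sketch. This is not Strac's implementation: the `redact_ssns` helper, the placeholder text, and the single regular expression are assumptions made for illustration, and a production detector would rely on far more than one pattern.

```python
import re

# Assumed pattern: US SSNs written as 123-45-6789 or 123 45 6789.
SSN_PATTERN = re.compile(r"\b\d{3}[- ]\d{2}[- ]\d{4}\b")


def redact_ssns(text: str, placeholder: str = "[REDACTED-SSN]") -> tuple[str, int]:
    """Return the text with SSN-like strings replaced, plus the number of matches."""
    return SSN_PATTERN.subn(placeholder, text)


payroll_row = "Jane Doe, SSN 123-45-6789, salary 85000"
safe_text, hits = redact_ssns(payroll_row)

# Only safe_text would be passed on to the model.
print(safe_text)  # Jane Doe, SSN [REDACTED-SSN], salary 85000
print(hits)       # 1
```

Returning the match count alongside the cleaned text lets a caller log or alert on detections without keeping the sensitive values themselves.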

ChatGPT stores user data on OpenAI’s secure servers. These servers are located in the United States, as stated in OpenAI’s privacy policy. The company implements diverse security measures to protect user data:

Despite these measures, it’s important to remember that no system is entirely immune to data breaches. Users should exercise care when transmitting sensitive information through ChatGPT.

For organizations looking to enhance their data security across various platforms, including AI services, Strac’s Sensitive Data Discovery and Classification solution can help identify and protect sensitive information across multiple cloud environments.

ChatGPT collects and stores two types of data:

This category encompasses data that ChatGPT collects automatically during your interaction with the AI:

In addition to the data received automatically, ChatGPT also saves the data you actively provide. Here’s what it includes:

Users can manage their privacy settings through ChatGPT's data controls, which let them opt out of model training and disable chat history.

Recent findings show that 75% of cybersecurity professionals have noted a significant rise in cyber-attacks over the past year. Notably, 85% of these experts believe this escalation is primarily due to generative AI technologies like ChatGPT. Here are a few risks associated with ChatGPT storing your sensitive data.

Companies exploring how to stop ChatGPT from keeping their data typically want to prevent two things: model learning from prompts and long-term storage of sensitive information. While OpenAI provides user controls for turning off model training, this does not solve the enterprise-level problem. Data entered into ChatGPT can still remain in logs, be visible to administrators, or be accessible through API usage unless additional controls are in place. Organizations need layered safeguards that prevent sensitive information from entering the ChatGPT ecosystem at all.

To keep ChatGPT from retaining your data, organizations can follow these steps:

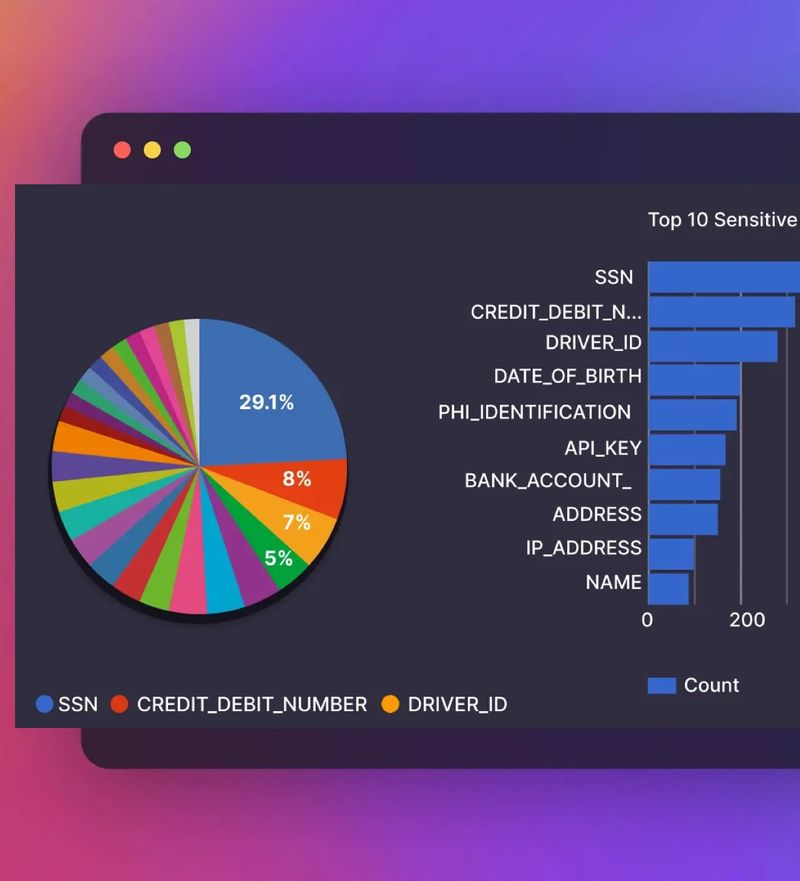

Strac provides additional protection for enterprises by enforcing continuous Data Discovery and Classification across Google Drive, Slack, Salesforce, Notion, and other tools that often feed into ChatGPT. Using ML and OCR, Strac detects sensitive data before it ever reaches an LLM. With real-time redaction, blocking, or masking, Strac prevents employees from unintentionally submitting PII, PHI, PCI, secrets, tokens, or confidential files into ChatGPT.

Bottom line: the best way to stop ChatGPT from keeping your data is to prevent sensitive information from entering it in the first place.
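As a rough illustration of real-time redaction and blocking before a prompt is submitted, the sketch below checks a prompt for two kinds of sensitive data. Everything in it is an assumption for illustration rather than a description of how Strac works: the `check_prompt` function, the `Verdict` type, and the policy of blocking credentials while redacting card numbers are all hypothetical. Card candidates are confirmed with the standard Luhn checksum to reduce false positives.

```python
import re
from dataclasses import dataclass

# 13 to 19 digits, optionally separated by single spaces or dashes.
CARD_CANDIDATE = re.compile(r"\b\d(?:[ -]?\d){12,18}\b")
# AWS access key IDs start with "AKIA" followed by 16 uppercase letters or digits.
AWS_KEY_ID = re.compile(r"\bAKIA[0-9A-Z]{16}\b")


def luhn_valid(number: str) -> bool:
    """Check a candidate card number with the Luhn checksum."""
    digits = [int(c) for c in number if c.isdigit()]
    total = 0
    for i, d in enumerate(reversed(digits)):
        if i % 2 == 1:  # double every second digit, counting from the right
            d *= 2
            if d > 9:
                d -= 9
        total += d
    return len(digits) >= 13 and total % 10 == 0


@dataclass
class Verdict:
    action: str          # "allow", "redact", or "block"
    text: str            # text that may be forwarded (empty when blocked)
    findings: list[str]  # labels of what was detected


def check_prompt(prompt: str) -> Verdict:
    # Assumed policy: prompts containing credentials are never forwarded.
    if AWS_KEY_ID.search(prompt):
        return Verdict("block", "", ["aws_access_key_id"])

    findings: list[str] = []

    def mask(match: re.Match) -> str:
        if luhn_valid(match.group()):
            findings.append("card_number")
            return "[REDACTED-CARD]"
        return match.group()  # digit run that fails Luhn is left untouched

    cleaned = CARD_CANDIDATE.sub(mask, prompt)
    return Verdict("redact" if findings else "allow", cleaned, findings)


print(check_prompt("Charge 4111 1111 1111 1111 today").text)
# Charge [REDACTED-CARD] today
```

Separating the verdict from the cleaned text keeps the decision auditable: the caller can log the action and the finding labels without storing the original prompt.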

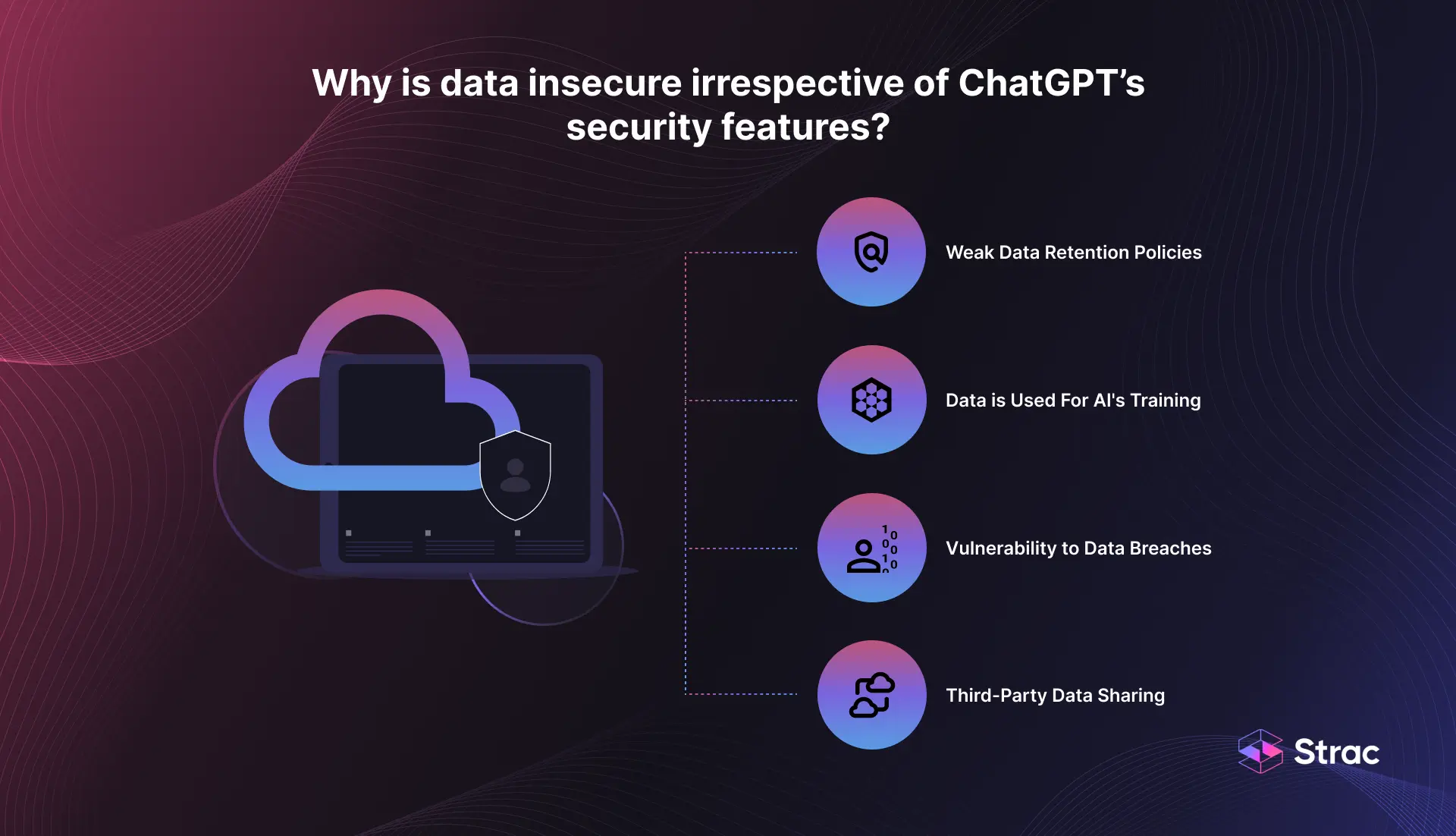

Despite OpenAI's security measures, such as encryption at rest and in transit, stringent access controls, and incentives for ethical hackers through a Bug Bounty program, data insecurity remains a pertinent issue. This is due to several inherent challenges in the way ChatGPT handles data.

OpenAI allows users to delete or disable chat history, yet it still retains those conversations for up to 30 days for monitoring purposes. This retention period poses a risk, because the stored data remains exposed to any attack on the systems that hold it.

ChatGPT is a machine learning model, and such models learn from the data they are trained on. OpenAI states that enterprise input is not used for training by default and that other users can opt out, but there is always a risk in accidentally or intentionally uploading sensitive data to the platform.

No system is immune to data breaches, and ChatGPT, despite its security protocols, is not an exception to this risk. Instances of compromised ChatGPT account credentials circulating on the Dark Web underscore the potential for unauthorized access to the sensitive data that ChatGPT holds.

There are concerns about ChatGPT potentially sharing user data with third parties for business operations without explicit user consent. This possibility of data sharing with unspecified parties adds another layer of risk regarding user privacy and data security.

Concerns about whether ChatGPT has had a data leak continue to grow as enterprises evaluate AI adoption. Those concerns intensified in March 2023, when OpenAI confirmed a bug that briefly exposed the titles of some users' conversation histories to other users. Although it was not a breach of training data, it demonstrated how unpredictable LLM ecosystems can be when logs, sessions, and retrieval layers are not fully isolated. Since then, several security researchers have shown that prompt injection, training data extraction, and jailbreak attacks can reveal system-level information from LLMs.

Even when OpenAI secures its infrastructure, the most common source of data leaks is not the model itself but employees pasting sensitive data into prompts or connecting unsecured knowledge bases. This is why enterprises must treat LLMs as another SaaS surface that requires discovery, classification, and real-time policy enforcement.
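Discovery and classification can be pictured as building an inventory of which sources hold which kinds of sensitive data before any of them is connected to an LLM. The sketch below is a simplified illustration under stated assumptions: the `classify` function, the two regex detectors, and the sample documents are hypothetical, and real classifiers use far richer signals than pattern matching.

```python
import re

# Illustrative detectors only; labels and patterns are assumptions.
DETECTORS = {
    "email": re.compile(r"\b[\w.+-]+@[\w-]+(?:\.[\w-]+)+\b"),
    "us_ssn": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}


def classify(documents: dict[str, str]) -> dict[str, list[str]]:
    """Map each document name to the sorted sensitive-data labels found in it."""
    report = {}
    for name, text in documents.items():
        labels = sorted(label for label, rx in DETECTORS.items() if rx.search(text))
        if labels:  # documents with no findings are left out of the report
            report[name] = labels
    return report


docs = {
    "handbook.txt": "Welcome to the team.",
    "payroll.csv": "jane@example.com,123-45-6789,85000",
}
print(classify(docs))  # {'payroll.csv': ['email', 'us_ssn']}
```

A report like this gives a policy engine something to act on, for example excluding `payroll.csv` from any knowledge base that an AI tool can read.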

Strac protects organizations from AI-related data leaks by:

With Strac, organizations can safely adopt generative AI while minimizing the risk of accidental exposure, unauthorized access, or leakage of sensitive information through LLM interactions.

Data Loss Prevention (DLP) tools protect sensitive data from threats such as cyber attacks, ransomware, and data breaches. DLP solutions often use machine learning to enforce data handling policies. They help maintain a strong security posture by blocking attempts to email sensitive materials, encrypting files from specific applications when access is requested, and taking other preventive actions aligned with a company's policies.

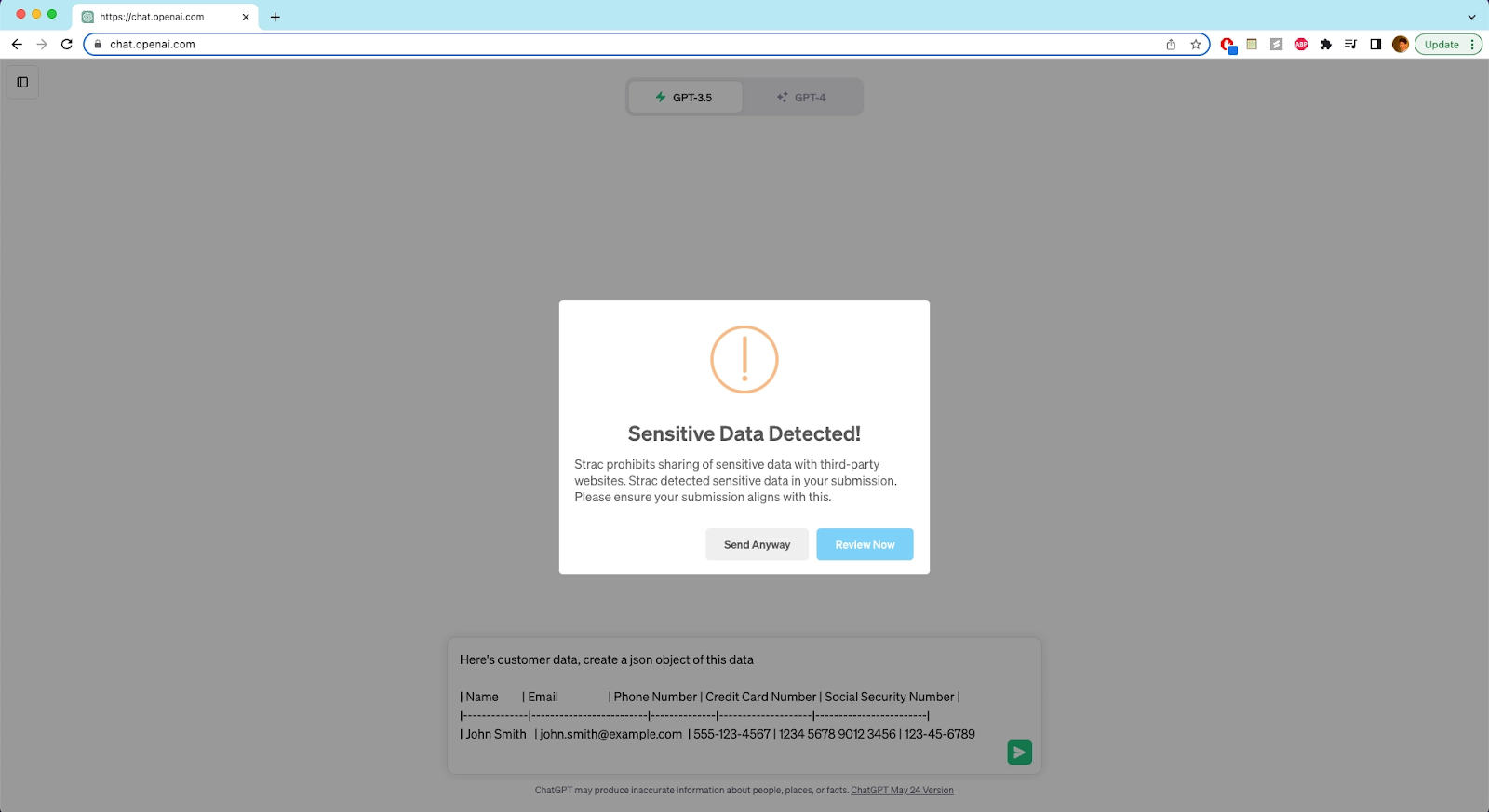

Strac's DLP solution offers a user-friendly browser extension (Chrome, Safari, Edge, Firefox) that proactively safeguards sensitive information in every ChatGPT interaction. Let's explore its features and the step-by-step implementation process.

Now, let's see how Strac's ChatGPT DLP functions:

The Strac Chrome extension isn't limited to ChatGPT; it seamlessly integrates with any website, showcasing its versatile application for data protection. Its wide-ranging utility is crucial for organizations handling sensitive data, providing a comprehensive approach to data security.

ChatGPT security risks are not only about whether the model saves data. The real exposure begins long before a prompt is submitted and often lives inside the SaaS systems, files, messages, and knowledge bases employees rely on every day. Even with OpenAI’s retention controls, enterprises remain responsible for preventing sensitive information from entering ChatGPT in the first place. That requires continuous visibility, accurate classification, and automated policy enforcement across all connected applications and data sources.

Strac defends against ChatGPT security risks by discovering sensitive data across SaaS, cloud, GenAI, and endpoints, classifying it with ML and OCR, and enforcing real-time redaction, masking, or blocking before it reaches an LLM. With unified DSPM and DLP from Strac, organizations can adopt generative AI confidently while maintaining compliance and control over their data.

Yes. ChatGPT stores every conversation, file upload, and image you send. The default retention is 30 days for Free, Plus, and Pro accounts, but conversations may be retained longer for safety review or to train future models. As of May 2025, a court order in NYT v. OpenAI requires OpenAI to preserve consumer ChatGPT logs indefinitely until the litigation concludes — including chats users have deleted. ChatGPT Enterprise and Team accounts are excluded from this preservation order.

ChatGPT data is stored in OpenAI's cloud infrastructure, primarily on Microsoft Azure. ChatGPT Enterprise and Team customers can request EU data residency; Free, Plus, and Pro consumer accounts are served from US-region infrastructure. Data is encrypted at rest with AES-256 and in transit with TLS 1.2+, but OpenAI staff and integrated services can access plaintext during normal processing.

Even with chat history disabled, OpenAI retains your conversations for up to 30 days for safety review before deletion — and as of May 2025, the court preservation order in NYT v. OpenAI overrides the standard deletion schedule for consumer accounts. Disabling chat history prevents OpenAI from using your conversations to train new models, but it does not prevent storage during the preservation window.

ChatGPT security risks are often tied to uncertainty about how data is handled under GDPR, CCPA, HIPAA, and other privacy regulations. These laws require transparency, purpose limitation, and clear controls over how personal data is processed. OpenAI provides certain protections, but compliance ultimately depends on how an enterprise submits, stores, and manages sensitive information when using ChatGPT. User prompts may still fall under regulated data categories if they contain names, IDs, health information, financial information, or internal company data.

To stay compliant, organizations should follow these practices:

With Strac, companies can automatically enforce these controls across Google Drive, Slack, Salesforce, Notion, and other sources that commonly feed into ChatGPT usage.

Enterprises face significant security risks when employees paste sensitive information into ChatGPT prompts or upload documents without governance. Safe usage requires a structured approach that prevents accidental exposure while enabling productivity. The goal is not to block ChatGPT but to ensure every interaction follows the same security standards already applied to SaaS systems.

Best practices include:

With these safeguards in place, businesses can use ChatGPT confidently without compromising compliance.

Many organizations ask whether their data is stored permanently, because ChatGPT security risks often relate to retention and visibility. Under its standard policy, OpenAI retains interaction data for a limited period rather than permanently, and opting out of training limits how that data is reused. The exception is data covered by a legal hold, such as the NYT v. OpenAI preservation order, which can keep consumer chats beyond the normal schedule. Logs may also exist for security, abuse prevention, and auditing, and data uploaded into knowledge bases can remain accessible to the model during retrieval.

Retention depends on several factors:

Strac allows organizations to prevent sensitive data from ever entering ChatGPT, removing the risk of long-term retention concerns.

ChatGPT security risks include the possibility that data is shared with service providers or subprocessors for operational purposes such as hosting, abuse detection, or security monitoring. OpenAI states that it does not sell data; however, data may still be accessible to authorized vendors depending on the infrastructure involved. This is standard across most cloud platforms, but it requires proper due diligence.

To ensure safety, businesses should:

With the right safeguards, the risk of unintended third-party exposure can be significantly reduced.

Organizations often want to know whether ChatGPT can delete data on request because retention and right-to-erasure are core requirements of GDPR and other privacy laws. OpenAI allows individuals and enterprise customers to request deletion of stored data depending on the plan and the data category. However, deletion applies only to stored interaction data and not to content sent from connected SaaS systems or knowledge bases.

This is why enterprises need additional controls:

By preventing sensitive data from entering ChatGPT in the first place, Strac ensures deletion requests are far simpler and more compliant with global privacy standards.
