Generative AI DLP in 2026: Browser, Endpoint, SaaS & MCP Data Loss Prevention

Generative AI DLP prevents sensitive data leaks across ChatGPT, Claude, Gemini, Copilot, MCP servers, and agents. Framework, implementation, and evaluation guide for 2026.

Generative AI is already inside everyday enterprise workflows. ChatGPT, copilots, and internal models now sit directly in systems that handle customer data, source code, financial records, and internal context. Once data enters an AI workflow, traditional DLP loses visibility and control.

This guide is the deep-dive companion to Strac's Generative AI DLP product page. If you are evaluating Gen AI DLP vendors, start there for the capability matrix and demo; come back here for the framework, architecture, and how-to-implement detail.

AI breaks the assumptions legacy DLP was built on:

If your DLP only understands files, emails, or endpoints, it cannot secure AI usage.

AI DLP exists because AI is a first-class data security surface, not an edge case.

AI DLP is best understood as data loss prevention purpose-built for LLM-driven workflows, not a simple extension of legacy controls. At its core, AI DLP governs how data moves into and out of generative AI systems by inspecting prompts, chat messages, uploads, and model-generated responses in real time. Traditional approaches focus on files or network traffic. AI DLP is about controlling language, context, and intent, which are the basic units of data movement in large language models.

This distinction is critical. In LLM DLP and generative AI DLP, sensitive information does not only exist as static records or attachments. It appears inside free-form text, conversational context, embeddings, and AI outputs that may summarize or transform the original data. Effective prompt DLP must therefore analyze both structured and unstructured data inline and apply enforcement before information ever reaches the model or leaves it.

The definition of AI DLP must be operational, not theoretical. If a solution cannot see prompts in real time and cannot enforce controls before data is submitted to or returned from an LLM, it is not AI DLP in practice; it is simply legacy DLP watching from the sidelines.

Traditional DLP was built for fixed boundaries: email, files, endpoints, and known network paths. AI workflows do not respect those boundaries.

Most AI usage happens in the browser. Users copy text, paste context, upload snippets, and interact with models using free-form language. None of this reliably passes through legacy DLP control points.

This is why the traditional DLP vs. AI DLP question is no longer theoretical: legacy controls fail in production.

Where traditional DLP breaks:

Spicy take: when DLP gets noisy, enterprises don’t tune it. They bypass it.

The table below summarizes why traditional DLP cannot cover Generative AI workflows, and what a Generative AI DLP control plane has to do differently.

AI DLP is no longer forward-looking. Employees use AI every day for legitimate work, such as writing emails, debugging code, summarizing tickets, and analyzing customer data. Most AI data leakage is accidental and happens fast.

Where risk shows up in practice:

If data protection cannot operate inline with AI interactions, it cannot reduce AI risk.

That is why AI DLP exists.

Anything else is visibility without control.

AI DLP only works if it reflects how data actually leaks in production GenAI workflows. Most organizations underestimate exposure because they think in terms of prompts alone. In reality, AI creates multiple parallel data leak paths.

If you cannot map where leakage happens, you cannot enforce controls where it matters. Discovery without inline enforcement does not reduce risk.

Where AI data leakage happens in practice:

Spicy take: most AI DLP failures happen after “prompt coverage” is declared complete.

AI DLP must enforce controls across inputs, uploads, integrations, and outputs in one system.

If remediation does not happen inline, AI DLP becomes observation, not protection.

Regulators do not distinguish between data leaked by email or by AI prompt. If sensitive data enters a model without controls, it is a compliance failure.

What auditors expect to see:

Shadow AI makes this unavoidable. Teams adopt new AI tools faster than governance can approve them. AI DLP focuses on controlling data inside AI tools, not pretending AI can be blocked entirely.

The browser is where 80% of generative AI data leaks happen. An employee opens a new ChatGPT tab, pastes a customer support ticket containing names, emails, and credit card numbers, and hits enter. The data is gone — into the model's context, often into training data, and almost always out of your compliance perimeter. Traditional DLP doesn't see this because the data never touches an email gateway, an endpoint file, or a SaaS API. It moves directly from clipboard to LLM.

👉 Download Strac Browser DLP Chrome Extension

What AI DLP must enforce in the browser:

▎ The Samsung lesson: In 2023, Samsung employees entered confidential data into ChatGPT in three separate incidents within roughly 20 days. The data included proprietary semiconductor source code and internal meeting notes. Browser DLP inspecting the paste and upload boundary could have flagged or stopped each incident before the data left the device. Samsung responded by banning generative AI tools on company devices. That is exactly the "Doctor No" outcome that kills AI productivity. Browser DLP lets you say yes to AI without saying yes to data leaks.

Learn more: https://strac.io/integration/chatgpt-dlp

Browser DLP is necessary but not sufficient. The fastest-growing class of AI tools is desktop-native: ChatGPT desktop, Claude desktop, Cursor, Windsurf, GitHub Copilot, Ollama, LM Studio. Browser extensions can't see any of them. An employee can drag a confidential PDF straight into Claude desktop and your browser DLP has zero visibility.

This is where endpoint DLP for AI matters. A lightweight Mac and Windows agent watches the file system and clipboard at the OS level, intercepting sensitive data before it reaches any AI client — browser-based or native.

What AI DLP must enforce on the endpoint:

The endpoint surface is especially critical for engineering and data science teams. Cursor and Windsurf are now standard developer tools, and they routinely send entire codebases to Claude and GPT-4 as context. Endpoint DLP is the only way to enforce what code those tools are allowed to send.

Learn more: https://www.strac.io/integrations/endpoint-dlp

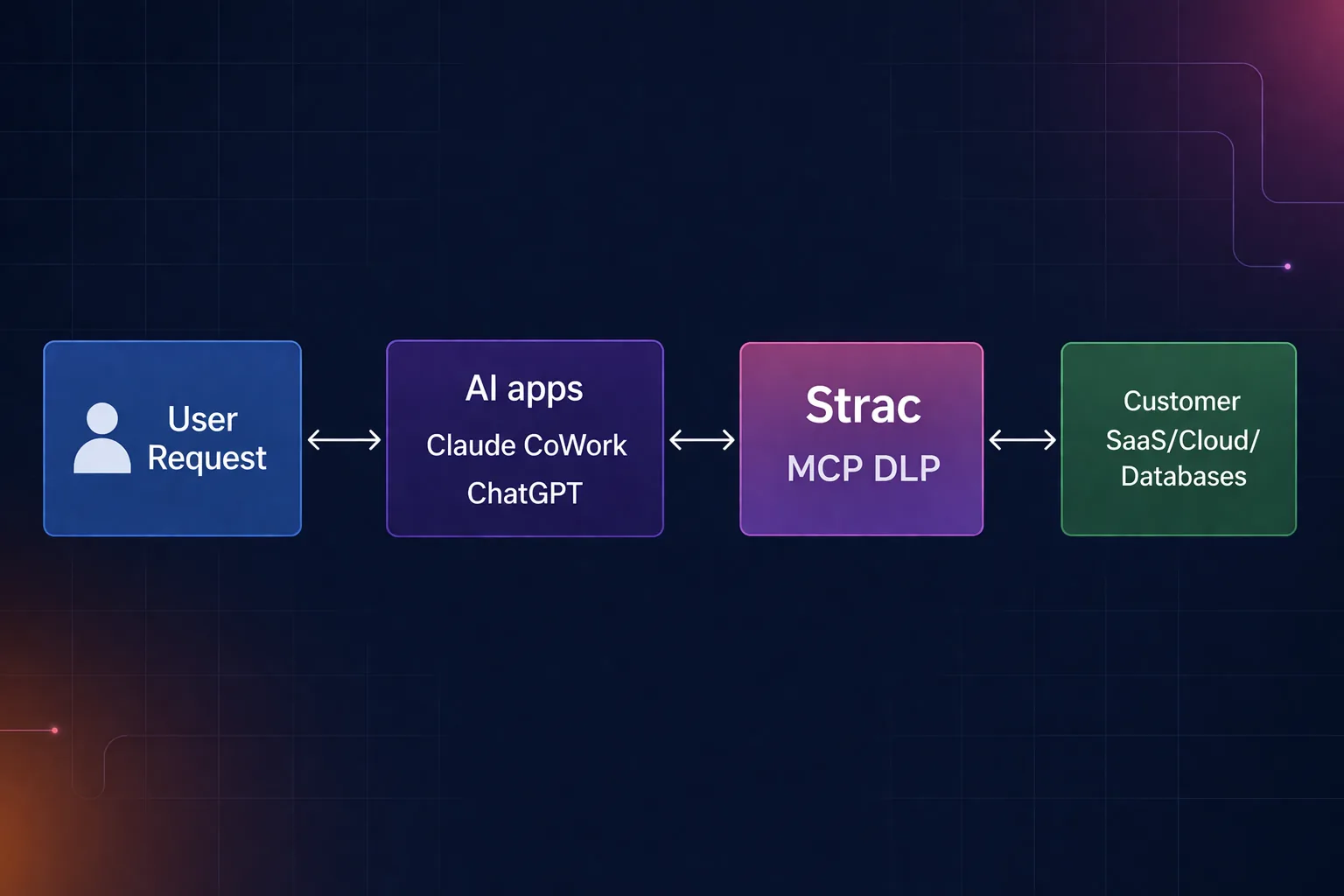

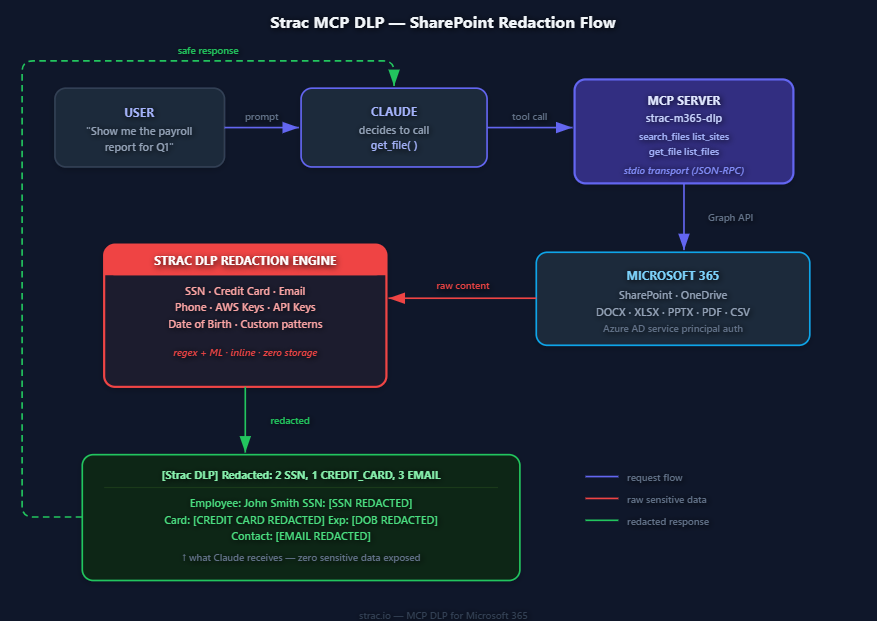

Model Context Protocol (MCP) is the newest — and most underestimated — AI data leak vector. MCP lets AI agents autonomously connect to your databases, source code repositories, file shares, and internal APIs. Once an agent has an MCP connection, it can read customer records, query Postgres, pull secrets from a config file, and write that data into a chat response — all without a human clicking anything. Legacy DLP, CASB, and proxy controls have zero visibility into this traffic because it's machine-to-machine inside a developer's own environment.

The risk is concrete: a Claude desktop user with an MCP server connected to a customer support database can ask "summarize the top 10 escalations this week" and have full PII flowing through the model context. A Cursor MCP integration with a production database can leak schema, secrets, and live customer rows into an AI prompt. Most security teams don't even know which MCP servers exist inside their org.

What AI DLP must enforce for MCP and AI agents:

▎ The MCP timing matters. MCP went from zero to standard in under twelve months. Most enterprise security teams haven't built any controls for it yet — and the agentic AI wave coming behind it (AutoGPT-style autonomous agents, multi-step tool use) will multiply the risk by an order of magnitude. The orgs that get MCP DLP in place in 2026 will be the ones that don't make headlines in 2027.

Learn more: https://strac.io/blog/mcp-dlp

AI DLP implementation works best when it is treated as a phased rollout plan, not a one-time policy project. The fastest way to fail with AI DLP is to start with aggressive blocking before you understand where sensitive data is actually flowing across ChatGPT, Gemini, and other GenAI tools. The goal is to move from policy on paper to enforcement in the prompt path, with measurable coverage, reduced noise, and audit-ready logs that prove governance is real.

Done this way, AI DLP is implementable in phases and improves over time. You can start with visibility and warnings, then progress to targeted enforcement without derailing productivity.

AI DLP for ChatGPT must be grounded in how people actually use the tool day to day. Most risk does not come from exotic API integrations; it comes from simple copy and paste, iterative prompt refinement, and document uploads for summarization, debugging, or analysis. Effective AI DLP focuses on these high-frequency workflows and enforces controls directly in the prompt path, while producing interaction-level audits that compliance teams can rely on and security teams can tune over time.

Strac ChatGPT AI DLP solution

The foundation of AI DLP for ChatGPT is real-time prompt inspection. Every prompt and pasted text block should be analyzed for sensitive content before submission, including PII, PHI, PCI, credentials, secrets, source code, and internal documents. This detection must work on unstructured language, not just patterns, because prompts often mix natural language with data fragments. If prompt content cannot be inspected inline, AI DLP coverage collapses at the most critical control point.
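To make real-time prompt inspection concrete, here is a minimal Python sketch. It assumes a handful of regex detectors plus a Luhn checksum for card numbers. The category names and patterns are illustrative assumptions, not Strac's detection engine. As the paragraph above notes, production detection also needs ML/NLP classification for unstructured text.

```python
import re
from dataclasses import dataclass

# Illustrative detectors only (assumption): real AI DLP engines add ML/NLP
# classification for unstructured text on top of patterns like these.
PATTERNS = {
    "EMAIL": re.compile(r"\b[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}\b"),
    "US_SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "AWS_ACCESS_KEY": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    # 13-19 digits, optionally separated by single spaces or hyphens.
    "CARD_NUMBER": re.compile(r"\b\d(?:[ -]?\d){12,18}\b"),
}


def luhn_valid(digits: str) -> bool:
    """Luhn checksum; filters out most random digit runs that only look like cards."""
    total = 0
    for i, ch in enumerate(reversed(digits)):
        d = int(ch)
        if i % 2 == 1:  # double every second digit, counting from the right
            d *= 2
            if d > 9:
                d -= 9
        total += d
    return total % 10 == 0


@dataclass
class Finding:
    category: str
    start: int
    end: int


def inspect_prompt(text: str) -> list[Finding]:
    """Scan a prompt before submission and return findings ordered by position."""
    findings = []
    for category, pattern in PATTERNS.items():
        for m in pattern.finditer(text):
            if category == "CARD_NUMBER" and not luhn_valid(re.sub(r"\D", "", m.group())):
                continue  # card-shaped but fails the checksum: skip
            findings.append(Finding(category, m.start(), m.end()))
    return sorted(findings, key=lambda f: f.start)
```

For example, `inspect_prompt("Refund card 4111 1111 1111 1111 for jane@example.com")` reports a `CARD_NUMBER` finding followed by an `EMAIL` finding. The offsets let a later step redact exactly those spans.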

Detection alone does not stop leakage. AI DLP must support inline remediation actions such as block, warn, or redact before sensitive data reaches ChatGPT. At the same time, every decision should be logged: who submitted what, which policy triggered, what action was taken, and when. These interaction audits are essential for investigations, compliance reviews, and proving that AI data loss prevention controls are enforced consistently.
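One way to capture "who submitted what, which policy triggered, what action was taken, and when" is a single JSON line per decision. The sketch below uses a hypothetical schema. The field names, the `policy_id` format, and the HMAC key are all assumptions. The prompt is stored only as a keyed digest, so the audit trail does not become a second copy of the sensitive data.

```python
import hashlib
import hmac
import json
from datetime import datetime, timezone


def audit_event(key: bytes, user: str, tool: str, prompt: str,
                policy_id: str, action: str, categories: set[str]) -> str:
    """Build one JSON audit line per DLP decision (hypothetical schema).

    The prompt is recorded as an HMAC-SHA256 digest: repeat submissions can be
    correlated, but the raw text (and any sensitive values in it) is not stored.
    """
    digest = hmac.new(key, prompt.encode("utf-8"), hashlib.sha256).hexdigest()
    return json.dumps({
        "timestamp": datetime.now(timezone.utc).isoformat(),  # when
        "user": user,                                          # who
        "tool": tool,                                          # where
        "policy_id": policy_id,                                # which policy
        "action": action,                                      # what was done
        "categories": sorted(categories),                      # what was found
        "prompt_hmac_sha256": digest,
    }, sort_keys=True)
```

Using a keyed HMAC rather than a plain hash matters for short prompts. Without a key, someone with the log could brute-force a low-entropy value such as an SSN by hashing guesses. Emitting one object per line also keeps the log easy to forward to a SIEM.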

AI DLP policies for ChatGPT should combine data categories with behavior patterns. Common examples include blocking prompts that contain raw PII or secrets, allowing masked email addresses, warning users when internal documents are pasted, and redacting sensitive fields while letting the rest of the prompt through. This flexibility is what keeps controls effective without breaking productivity.
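The policy examples above can be expressed as a small rule evaluator. This is a sketch under stated assumptions. The secret patterns, the rule that an email whose local part contains `*` counts as already masked, and the "CONFIDENTIAL / INTERNAL ONLY" marker standing in for "internal document" are all illustrative choices. The strongest triggered action wins.

```python
import re

# Illustrative rules (assumptions): detectors and actions are examples only.
SECRET = re.compile(r"\b(?:AKIA[0-9A-Z]{16}|ghp_[A-Za-z0-9]{36})\b")
EMAIL = re.compile(r"\b([A-Za-z0-9._%+*-]+)@[A-Za-z0-9.-]+\.[A-Za-z]{2,}\b")
INTERNAL_MARKER = re.compile(r"\b(?:CONFIDENTIAL|INTERNAL ONLY)\b", re.IGNORECASE)

RANK = {"allow": 0, "warn": 1, "redact": 2, "block": 3}


def evaluate(prompt: str) -> tuple[str, list[str]]:
    """Return the strongest action triggered by the prompt plus the reasons."""
    action, reasons = "allow", []

    def escalate(new_action: str, reason: str) -> None:
        nonlocal action
        reasons.append(reason)
        if RANK[new_action] > RANK[action]:
            action = new_action

    if SECRET.search(prompt):
        escalate("block", "raw secret")
    for m in EMAIL.finditer(prompt):
        # A local part containing "*" is treated as already masked and allowed.
        if "*" not in m.group(1):
            escalate("redact", "unmasked email")
    if INTERNAL_MARKER.search(prompt):
        escalate("warn", "internal document marker")
    return action, reasons
```

Keeping every reason, not just the winning action, gives the audit log and the user notification the full picture of why a prompt was stopped or changed.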

The success metric for AI DLP in ChatGPT is simple and measurable. Sensitive data should be blocked or redacted before it ever reaches ChatGPT, not detected after the fact.

At the control layer, AI DLP for Gemini looks familiar. The same principles apply: inspect prompts, govern uploads, enforce inline, and log everything. What changes is how users share data. Gemini usage is deeply tied to Google ecosystem workflows, including Sheets, CSV exports, screenshots, and documents pulled from Drive. This means AI DLP must account for both conversational prompts and rich file-based inputs without relying solely on storage-level scanning.

Slack Redaction: Detect and Redact sensitive messages and files

Gemini DLP must inspect prompt text and uploaded files in real time. Users frequently paste tables from Sheets, upload CSVs for analysis, or attach screenshots and PDFs. AI DLP needs to classify structured and unstructured content inline and apply policy before the data is processed by the model.
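For structured uploads such as a CSV exported from Sheets, classification can use both column headers and cell values. The sketch below is a minimal version under stated assumptions. The header hints and value patterns are illustrative, and a real classifier would cover far more data types.

```python
import csv
import io
import re

# Illustrative header hints and value patterns (assumptions, not exhaustive).
HEADER_HINTS = {"ssn": "US_SSN", "email": "EMAIL", "dob": "DATE_OF_BIRTH"}
VALUE_PATTERNS = {
    "US_SSN": re.compile(r"\d{3}-\d{2}-\d{4}"),
    "EMAIL": re.compile(r"[^@\s]+@[^@\s]+\.[A-Za-z]{2,}"),
}


def classify_csv(data: str) -> dict[str, set[str]]:
    """Map each CSV column to the sensitive categories found in its header or cells."""
    reader = csv.DictReader(io.StringIO(data))
    columns = {name: set() for name in (reader.fieldnames or [])}
    for name in columns:
        hint = HEADER_HINTS.get(name.strip().lower())
        if hint:
            columns[name].add(hint)
    for row in reader:
        for name, value in row.items():
            # Skip overflow cells (DictReader key None) and empty values.
            if name not in columns or not value:
                continue
            for category, pattern in VALUE_PATTERNS.items():
                if pattern.fullmatch(value.strip()):
                    columns[name].add(category)
    return {name: cats for name, cats in columns.items() if cats}
```

Header hints catch columns that are sensitive by name even when a sample row is empty. Value patterns catch sensitive data hiding under generic headers like "contact".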

As with ChatGPT, Gemini controls should start with audit and warn modes to tune policies, then progress to blocking or redaction for high-risk data. These remediation modes must be configurable by data type, user group, and use case to avoid unnecessary friction.
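Configuring modes "by data type, user group, and use case" can be as simple as a lookup table. In the sketch below, an exact group match overrides a wildcard, and anything unmatched falls back to audit-only. The rule set and group names are hypothetical. They show one plausible rollout: SSNs are blocked everywhere, while source code stays in audit mode for engineering during tuning.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Rule:
    data_type: str
    group: str  # "*" matches any user group
    mode: str   # "audit" (log only), "warn", "redact", or "block"


# Hypothetical rollout table; modes are tightened over time as policies are tuned.
RULES = [
    Rule("US_SSN", "*", "block"),
    Rule("CARD_NUMBER", "*", "redact"),
    Rule("SOURCE_CODE", "engineering", "audit"),
    Rule("SOURCE_CODE", "*", "warn"),
]


def resolve_mode(data_type: str, group: str, default: str = "audit") -> str:
    """Exact group match beats the wildcard; unmatched data types fall back to default."""
    wildcard = None
    for rule in RULES:
        if rule.data_type != data_type:
            continue
        if rule.group == group:
            return rule.mode
        if rule.group == "*" and wildcard is None:
            wildcard = rule.mode
    return wildcard or default
```

Promoting a data type from `audit` to `warn` to `block` is then a one-line change to the table rather than a new policy.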

Gemini interactions must generate the same level of evidence as any other regulated workflow. AI DLP should produce detailed logs and integrate with SIEM or SOAR systems so security teams can correlate AI events with broader incident response and compliance reporting.

The key differentiator to understand is this. Workspace-only DLP that scans files at rest is not enough for Gemini. Gemini requires prompt-path controls that operate inline, at the browser or interaction layer, because that is where sensitive data actually moves.

Evaluating AI DLP is not about feature depth; it is about whether the tool survives real daily AI usage. If it cannot enforce controls inline, stay accurate at scale, and fit into existing security operations, it will be ignored or turned off.

What an AI DLP solution must prove:

Spicy take: if an AI DLP tool can’t enforce inline on day one, it won’t be enforcing six months later either.

AI DLP buyers are no longer looking for isolated controls bolted onto individual chatbots. They need a single control plane that governs how sensitive data moves across ChatGPT, Gemini, Claude, and other GenAI tools, without fragmenting policy management or operational visibility. Just as importantly, AI DLP must move beyond alerting. In production environments, teams need real-time enforcement and remediation that stop leaks as they happen, while producing evidence that stands up in audits. This is where a unified, enforcement-first approach becomes essential.

Strac approaches AI DLP as an end-to-end control layer that operates inline across GenAI workflows, rather than as a collection of point solutions. The focus is on consistent policy enforcement, real-time inspection, and audit-ready outcomes across tools and teams.

The outcome is clear and measurable. AI DLP with Strac enables teams to adopt AI confidently, stops regulated data from leaking in real time, and produces the audit evidence security and compliance programs require, without slowing down how people actually work.

AI DLP is quickly becoming the minimum viable control set for any enterprise adopting GenAI at scale. Prompts, uploads, and AI-generated outputs are no longer edge cases; they are now mainstream data exfiltration paths woven into everyday work. Treating AI risk as an extension of legacy DLP leaves organizations exposed precisely where sensitive data moves fastest and with the least friction.

The winning approach to AI DLP is not policy-only. It is visibility plus inline enforcement plus auditability. Security teams must be able to see how AI tools are used, enforce controls in real time before data reaches ChatGPT or Gemini, and produce clear evidence that policies were applied consistently. Without all three, AI governance collapses under real-world pressure.

The test is simple. If an AI DLP solution cannot inspect prompts, uploads, and outputs and remediate risk before sensitive data is submitted or returned, it will not meaningfully reduce exposure, and it will not hold up in compliance or audit reviews.

AI DLP, or AI data loss prevention, is a set of controls designed to prevent sensitive data from being exposed through generative AI systems. It governs how data enters and exits AI workflows by inspecting prompts, contextual memory, and model outputs in real time. Unlike legacy approaches, AI DLP is built for unstructured, conversational data and enforces protection before information is consumed by or emitted from AI models.

The difference between traditional DLP vs AI DLP comes down to context, timing, and data structure. Traditional DLP focuses on static objects such as files, emails, and databases, using deterministic rules and regex patterns. AI DLP operates inline within AI interactions, evaluates semantic meaning and intent, and enforces controls in real time. This makes it effective in environments where data is dynamic, unstructured, and continuously transformed.

Yes, AI DLP can prevent data leaks in tools like ChatGPT and enterprise copilots when it is integrated inline with those workflows. Effective AI DLP can:

This approach prevents sensitive information from being exposed rather than simply detecting it after the fact.

AI DLP supports GDPR and HIPAA compliance by enforcing controls on how regulated data is handled inside AI workflows. While regulations do not mandate specific AI DLP tools, they require organizations to prevent unauthorized disclosure of personal and health data. AI DLP provides the visibility, enforcement, remediation, and audit evidence needed to demonstrate that sensitive data is protected when AI systems are used.

Deployment time depends on architecture and integration depth, but modern AI DLP platforms are designed for rapid rollout. Many SaaS-native AI DLP solutions can be deployed in days rather than months by integrating directly with existing SaaS and GenAI tools. Faster deployment is critical, as AI adoption often outpaces traditional security implementation timelines.

A gateway sits between the user and the model and inspects API traffic. That works for sanctioned, API-routed usage (a fine-tuned internal LLM, a vendor integration) but misses the dominant 2026 leak path: an employee pasting customer data into ChatGPT in their browser, or uploading a contract to Claude. Generative AI DLP has to operate at the data layer — the browser, the endpoint, the SaaS app, the email — not just at the network layer. A gateway is a useful complement, not a substitute.

They include partial controls — tenant isolation, training opt-outs, data residency commitments, and some prompt logging. None of them inspect or redact the regulated data inside the prompt before it leaves the user's device, and none cover the other AI tools the same employee will paste into ten minutes later. Enterprise tiers are necessary; they are not a Generative AI DLP program on their own.

It has to. MCP servers and AI agents act on data on behalf of users — reading files, calling APIs, writing back. A Generative AI DLP program in 2026 inventories MCP servers, scopes their permissions, logs every tool invocation with the data accessed, and decommissions them when employees leave. Generative AI DLP that stops at the chat box is already out of date.
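As a rough illustration of inventorying, scoping, logging, and decommissioning MCP servers, the sketch below places a hypothetical policy gate in front of tool calls. In MCP, these calls arrive as `tools/call` requests. `McpServer`, `McpGate`, the tool names, and the offboarding hook are assumptions for illustration. They are not part of the MCP specification or any Strac API.

```python
import json
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class McpServer:
    name: str
    owner: str
    allowed_tools: set[str]  # permission scope: the only tools this server may run
    active: bool = True


@dataclass
class McpGate:
    """Hypothetical gate: inventory of servers, per-call authorization, and a call log."""
    servers: dict[str, McpServer] = field(default_factory=dict)
    log: list[str] = field(default_factory=list)

    def register(self, server: McpServer) -> None:
        self.servers[server.name] = server

    def offboard(self, owner: str) -> None:
        # Decommission every server owned by a departing employee.
        for server in self.servers.values():
            if server.owner == owner:
                server.active = False

    def authorize(self, server_name: str, tool: str, resource: str) -> bool:
        server = self.servers.get(server_name)
        allowed = bool(server and server.active and tool in server.allowed_tools)
        # Every invocation is logged, including the denied and unknown ones.
        self.log.append(json.dumps({
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "server": server_name,
            "tool": tool,
            "resource": resource,
            "allowed": allowed,
        }))
        return allowed
```

Unregistered servers are denied by default. That is what turns the inventory into a control rather than a spreadsheet.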

If the policy is configured as block-everything, yes. The pattern that works is warn-then-redact: regulated data is silently redacted before it reaches the model, the prompt still goes through, the user gets a non-blocking notification. Employees keep working; the regulated data never leaves. Most enterprises see redactions on roughly 2–5% of prompts within the first month and outright blocks on under 0.5%.
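Here is a minimal sketch of the warn-then-redact pattern, assuming two regex detectors. Each sensitive value is replaced with a typed placeholder, the rest of the prompt goes through unchanged, and the user gets a non-blocking notice. The detector set and notice wording are illustrative assumptions.

```python
import re

# Illustrative detectors (assumption); a real deployment would reuse its full engine.
DETECTORS = {
    "EMAIL": re.compile(r"\b[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}\b"),
    "US_SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}


def redact(prompt: str) -> tuple[str, list[str]]:
    """Replace detected values with typed placeholders.

    Returns the redacted prompt (still sent to the model) and a list of
    non-blocking notices to show the user.
    """
    notices = []
    for category, pattern in DETECTORS.items():
        prompt, count = pattern.subn(f"[{category}]", prompt)
        if count:
            notices.append(f"{count} {category} value(s) redacted before sending")
    return prompt, notices
```

Because each placeholder keeps the data type (`[EMAIL]`, `[US_SSN]`), the model can usually still answer a question about the ticket without seeing the underlying values.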

Generative AI DLP produces evidence for SOC 2 CC6.6 (logical access protections against threats from outside the system boundary) and CC6.7 (restricting the transmission, movement, and removal of information), HIPAA's minimum-necessary standard and accounting-of-disclosures, EU AI Act Article 10 (data governance and quality), and GDPR Articles 5, 25, and 35. The same control, an inspected, logged, redacted prompt, generates evidence for all four frameworks. See our AI Data Governance framework for the full mapping.
