What Is AI Data Security? How Enterprises Secure AI Systems

AI data security covers how sensitive data flows through AI systems, where exposure happens, and how enterprises enforce control across prompts, inference, and outputs.

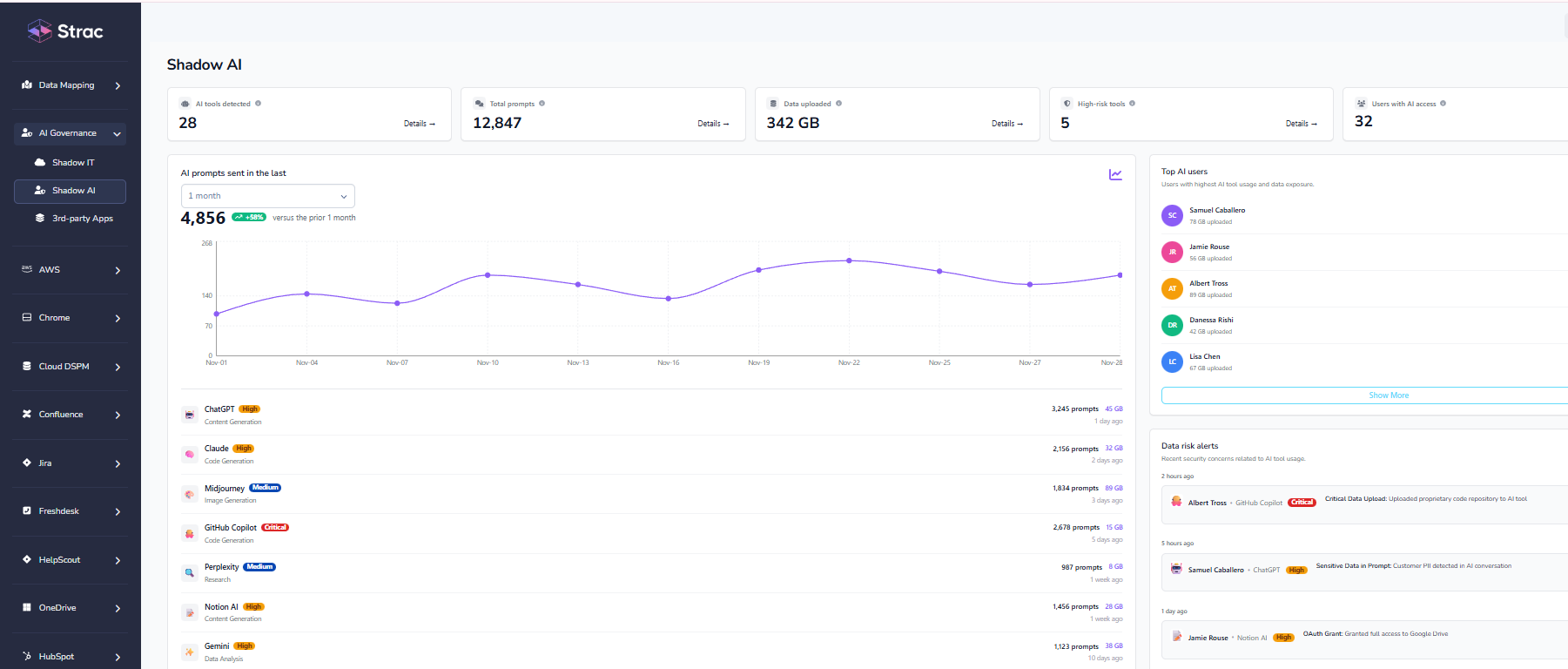

AI data security is a high-risk blind spot for businesses because most security programs were never designed for systems that continuously consume, transform, and regenerate data at runtime.

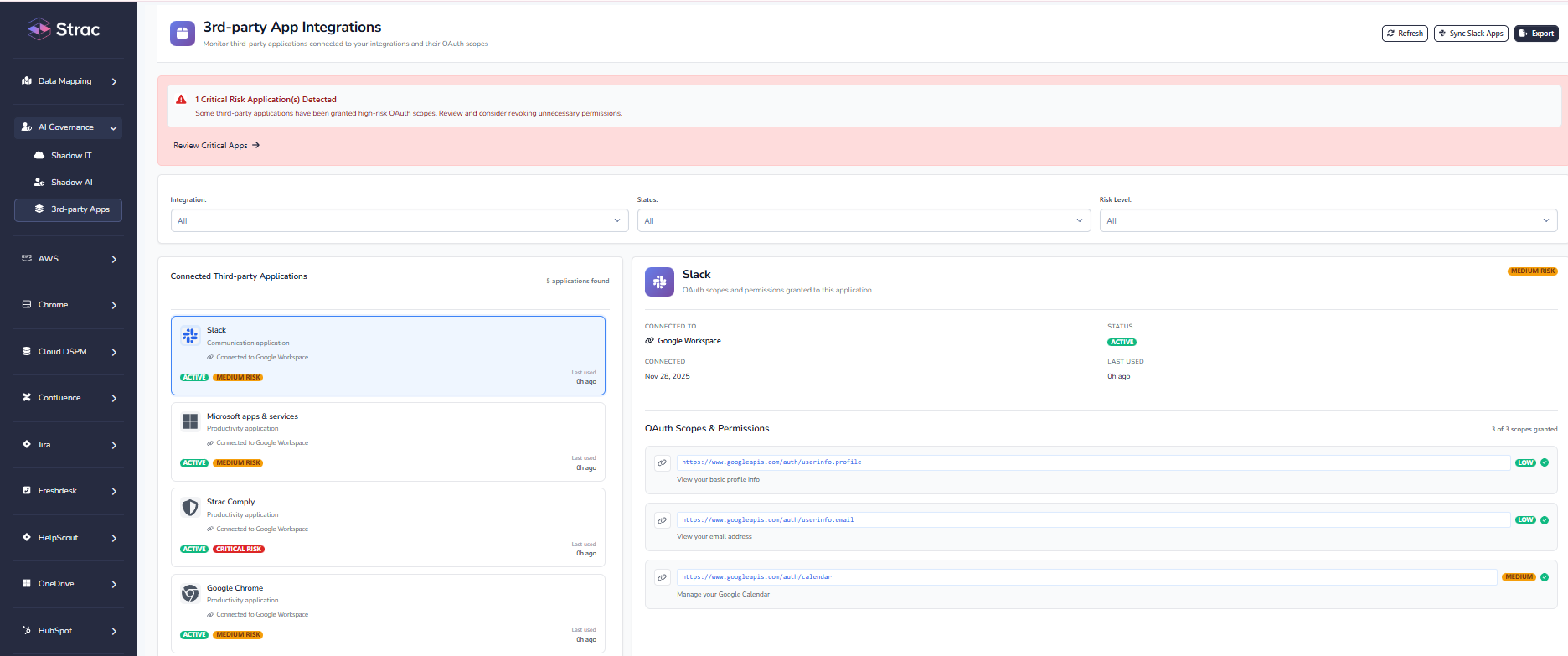

As soon as large language models, copilots, or AI agents are connected to SaaS tools, cloud storage, data warehouses, or internal APIs, sensitive data stops behaving like a file. It becomes part of a continuous workflow. That shift is where most traditional security models quietly break.

This guide explains where AI data exposure actually happens today, why legacy approaches fall short in real environments, and what enterprise teams need to secure AI systems at scale.

AI data security is no longer theoretical. It is an operational requirement for enterprises deploying AI across SaaS, cloud, and internal workflows.

The core challenge is not model safety; it is data behavior. AI systems continuously ingest, transform, and regenerate information in ways traditional controls were never designed to observe or enforce.

AI data security refers to the controls that protect sensitive information as it flows through AI systems. This includes data used for training, fine-tuning, inference, prompt input, retrieval pipelines, and generated outputs.

Unlike traditional applications, AI systems do not simply store or transmit data. They actively reshape it. Sensitive information may exist only briefly inside a prompt or context window, yet still reappear downstream in responses, logs, tickets, or automated actions.

In practice, AI data security ensures:

Traditional security methods were designed for static environments. AI environments are dynamic by default.

Most legacy approaches assume:

AI breaks these assumptions. Data is transient. It is processed in memory. It moves across systems faster than audits or alerts can keep up.

Common failure points include:

According to IBM’s annual data breach research, breach costs increase as environments grow more complex and detection slows. AI accelerates both trends by expanding the blast radius of a single prompt or integration.

Effective AI data security is built from multiple layers that operate together across the AI lifecycle. Each layer addresses a different failure mode introduced by AI systems.

Enterprises must first understand what sensitive data exists before connecting it to AI workflows. Discovery must span:

Key requirement: continuous discovery. Periodic scans fail in SaaS and AI-driven environments where data changes daily.
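As a rough illustration of what "continuous" can mean in practice, the sketch below re-classifies only documents whose content has changed since the previous pass, so a scan can run frequently rather than periodically. The detector patterns, the `classify` and `rescan_changed` names, and the in-memory hash store are all assumptions made for this example, not part of any specific product.

```python
import hashlib
import re

# Hypothetical detectors; real classifiers cover far more data types.
DETECTORS = {
    "email": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "us_ssn": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}


def classify(text):
    """Return the sorted names of detectors that match the text."""
    return sorted(name for name, pattern in DETECTORS.items() if pattern.search(text))


def rescan_changed(documents, seen_hashes):
    """Classify only documents whose content changed since the last pass.

    documents: dict mapping a document ID to its current text.
    seen_hashes: dict mapping a document ID to the content hash recorded
    on the previous pass; it is updated in place.
    """
    findings = {}
    for doc_id, text in documents.items():
        digest = hashlib.sha256(text.encode("utf-8")).hexdigest()
        if seen_hashes.get(doc_id) == digest:
            continue  # unchanged since the last pass, nothing to re-scan
        seen_hashes[doc_id] = digest
        findings[doc_id] = classify(text)
    return findings
```

Calling `rescan_changed` repeatedly with the same `seen_hashes` dictionary returns findings only for new or modified documents, which is what keeps frequent passes cheap.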

AI data governance defines which data types are allowed in specific AI use cases, models, and user groups. Governance translates legal, regulatory, and business intent into enforceable policy.
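One way to picture governance as enforceable policy is a small lookup table keyed by use case, model, and user group. The sketch below is a minimal illustration under that assumption; the `Policy` class, the `is_allowed` function, and every use case, model, group, and data type name are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Policy:
    use_case: str
    model: str
    user_group: str
    allowed_data_types: frozenset


# Hypothetical policy table; all names are illustrative only.
POLICIES = [
    Policy("support_copilot", "internal-llm", "support",
           frozenset({"public", "customer_contact"})),
    Policy("code_assistant", "external-llm", "engineering",
           frozenset({"public"})),
]


def is_allowed(use_case, model, user_group, data_types):
    """Allow a request only if a policy matches and covers every data type.

    Requests with no matching policy are denied (default-deny).
    """
    for policy in POLICIES:
        if (policy.use_case, policy.model, policy.user_group) == (
            use_case, model, user_group
        ):
            return set(data_types) <= policy.allowed_data_types
    return False
```

The default-deny choice is deliberate in this sketch: a new use case or model gets no data until someone writes a policy for it.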


Modern AI data security must go beyond detection.

Enterprise-grade enforcement includes:

Without runtime enforcement, discovery and governance remain theoretical.

AI usage evolves rapidly. New prompts, models, integrations, and workflows continuously reshape exposure. Security controls must adapt in real time rather than relying on static policies.

AI introduces new data risks, but it also enables stronger security controls when applied intentionally.

Security teams increasingly rely on AI-powered classification to identify sensitive data across everyday workflows, including:

AI is also embedded directly into enforcement workflows. AI-aware DLP inspects prompts and responses in real time, preventing sensitive data from being shared with external models or exposed in outputs.

In practice, this means inspecting prompts and responses as they happen and applying policy before sensitive data leaves the organization. For example, controlling what employees paste into tools like ChatGPT and what those tools return prevents accidental leakage long before alerts or audits would surface it.
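The inspect-then-enforce step described above can be sketched as a single function that runs on a prompt before it is sent, or on a response before it is shown. This is a simplified illustration, not a real DLP engine: the two regular expressions, the `secret_` token format, and the `enforce` function name are assumptions made for the example.

```python
import re

# Hypothetical rules: (label, pattern, action). A production engine would
# use far richer detection than two regular expressions.
RULES = [
    ("credential", re.compile(r"\bsecret_[A-Za-z0-9]{16,}\b"), "block"),
    ("email", re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"), "redact"),
]


def enforce(text):
    """Apply policy to a prompt or response before it crosses the boundary.

    Returns (action, text) where action is "allow", "redact", or "block".
    Blocked text is returned as an empty string.
    """
    action = "allow"
    for label, pattern, rule_action in RULES:
        if not pattern.search(text):
            continue
        if rule_action == "block":
            return "block", ""
        # Replace each match with a labeled placeholder and keep checking
        # the remaining rules against the redacted text.
        text = pattern.sub(f"[REDACTED:{label}]", text)
        action = "redact"
    return action, text
```

Running the same function on both directions of traffic is the point of the sketch: what employees paste in and what the tool returns pass through one policy check before either leaves its side of the boundary.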

When discovery, runtime enforcement, and continuous monitoring operate together across AI workflows, security teams gain posture-level visibility into AI risk. At that point, they know what data AI can access, how it is being used, and where enforcement is applied in real time, which is what AI security posture management looks like in production environments.

Keeping data safe in an AI-driven world requires a shift from perimeter-based security to workflow-based enforcement.

In practice, this means:

Enterprises that adopt AI-aware governance and enforcement early gain control as AI usage scales. Those that delay often find themselves reacting to exposure after it has already propagated across systems.

AI data security matters because AI systems are now embedded in everyday work. Employees paste data into prompts. Models pull context from internal systems. Outputs get copied into tickets, documents, and downstream workflows. Once that starts happening at scale, small mistakes turn into systemic risk.

The problem is not that teams lack policies or intent. It is that traditional security controls were never designed for systems that think in context, operate in memory, and generate new content on the fly. Scanning files or reviewing alerts after the fact does not stop sensitive data from moving through AI systems.

Real AI data security comes down to a few practical truths. Teams that stay in control do a small number of things consistently well:

Teams that approach AI data security this way gain confidence as AI adoption grows. Teams that do not usually discover the gaps only after data has already escaped. At this point, AI data security is not about preparing for the future; it is about staying in control of how work actually gets done today.

No. Traditional DLP focuses on files, emails, and known data repositories. AI data security goes further, covering sensitive data inside prompts, context windows, embeddings, retrieval pipelines, and AI-generated outputs. AI systems reshape data dynamically, which legacy DLP was never designed to control.

No. Access controls define who can use an AI system, not what data the system processes once access is granted. AI data security requires content-level controls that inspect, redact, or block sensitive data during inference and output generation.

Yes. DSPM for AI provides foundational visibility into where sensitive data lives and how it feeds AI systems. However, DSPM alone is not sufficient; it must be paired with runtime enforcement to prevent real-time AI data exposure.

No. Any organization using AI with customer data, internal documents, source code, or proprietary IP faces AI data exposure risk. AI data security applies to SaaS companies, enterprises, and startups alike, not just regulated sectors.

Continuously. AI usage patterns, models, integrations, and prompts change far faster than traditional applications. AI data security controls must evolve in real time to remain effective as AI systems and workflows expand.
