The Invisible Data Leak From Shadow AI and GenAI Tools

Shadow AI and GenAI tools can expose sensitive data. Learn how Strac detects Shadow AI, monitors prompts, and prevents AI data leaks in real time.

Your organization probably didn’t experience a data breach today.

But sensitive data may still have left your environment.

An employee copies a customer list from Salesforce.

They paste it into ChatGPT to summarize the accounts.

A developer uploads a configuration file to an AI assistant to debug an issue.

A marketer pastes internal campaign data into an AI writing tool.

No malware.

No suspicious downloads.

No traditional security alerts.

Just normal employees using AI tools.

This is the new reality of Shadow AI.

Employees are adopting AI tools faster than security teams can track them, and many of those tools connect directly to internal systems and data.

That means sensitive information can leave the organization in ways most security stacks never see.

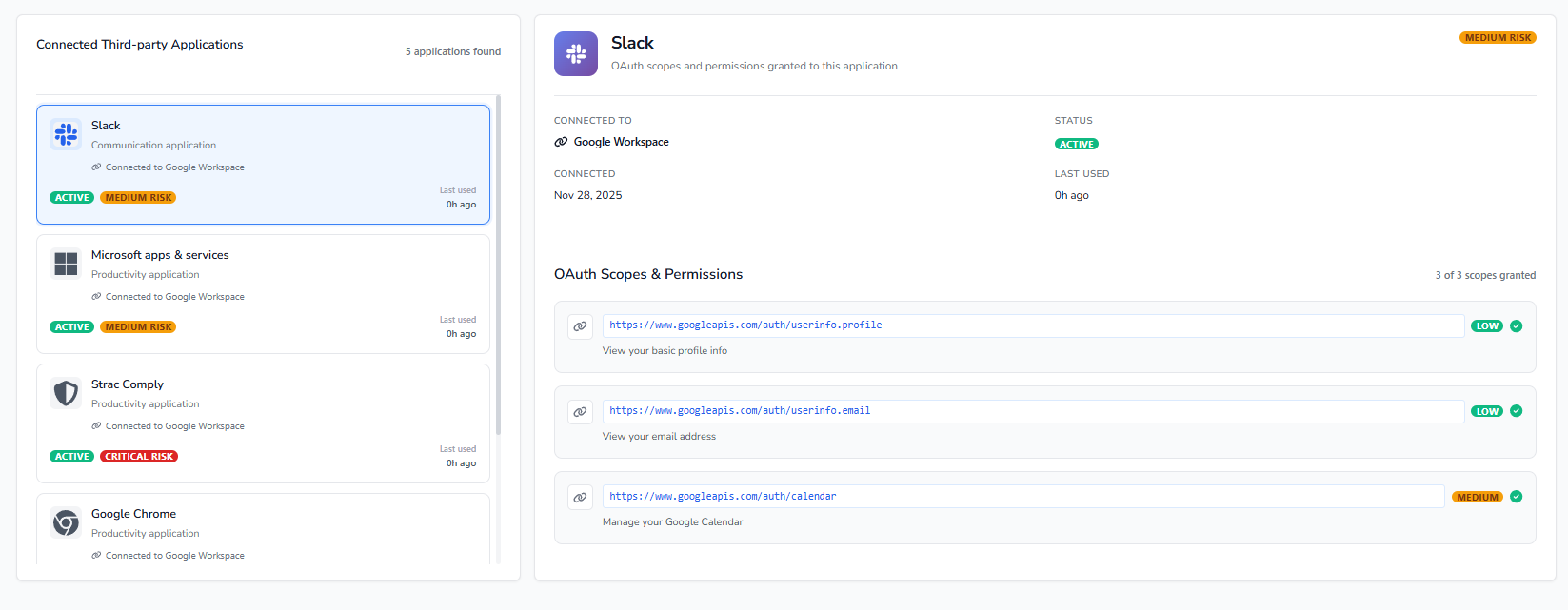

Many modern AI tools connect to the systems your organization uses every day.

These tools often integrate with:

Once connected, an AI tool can retrieve and synthesize information across multiple systems instantly.

A single prompt like:

“Summarize our top enterprise customers and their recent support issues.”

could pull information from:

The response looks like a simple AI-generated paragraph.

But inside that paragraph may be customer lists, pricing data, internal strategies, or proprietary code.

And once that response is generated, it can easily be copied or shared outside the organization.

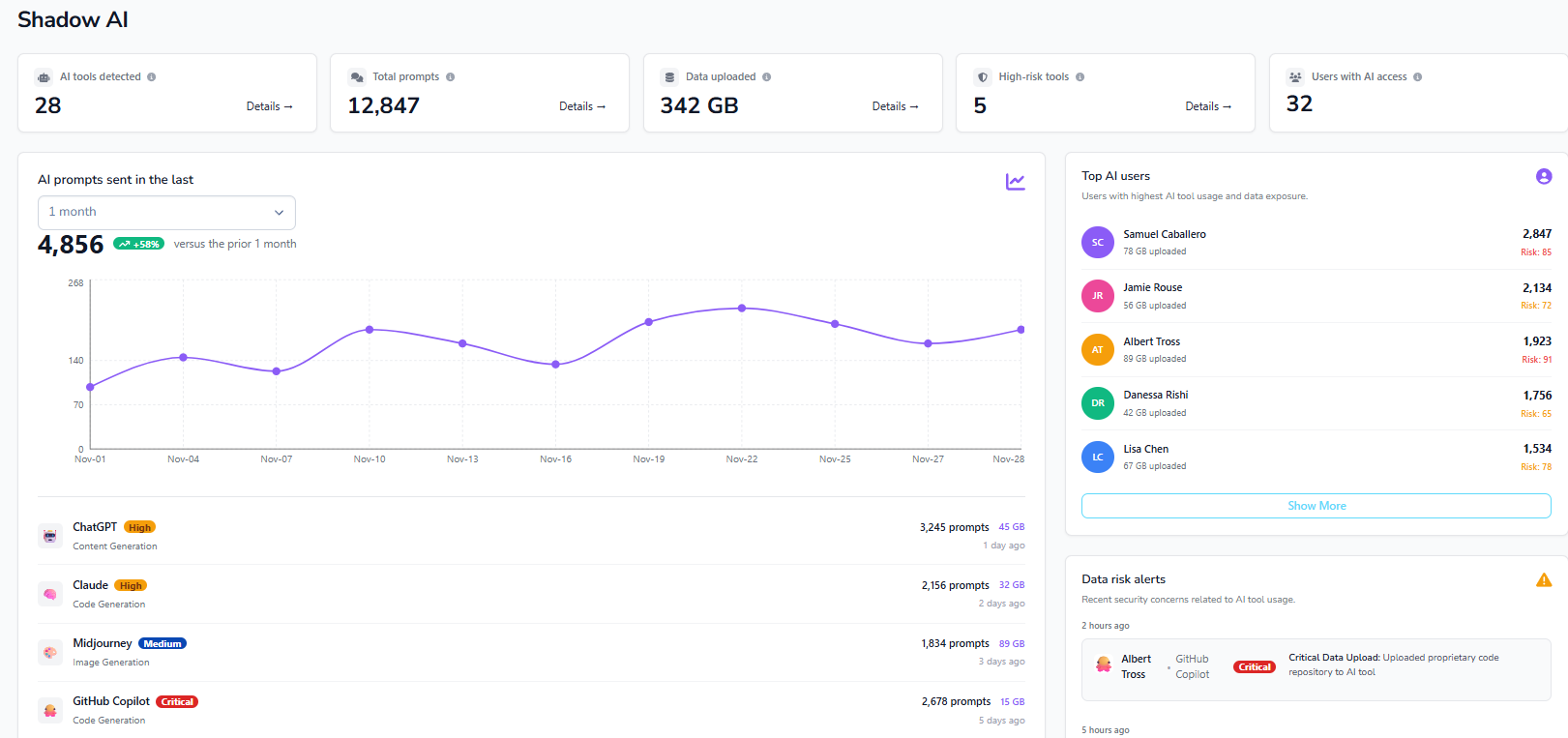

Most organizations don’t realize how many AI tools employees are already using.

Employees experiment with:

Many of these tools connect to company data through OAuth permissions or SaaS integrations.

Over time, organizations may accumulate dozens of unmanaged AI apps.

These tools often have access to:

Security teams may not even know these connections exist.

This is the Shadow AI problem.
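
To make the discovery problem concrete, here is a minimal sketch of how a security team might triage third-party app grants once it has an inventory of them. Everything here is an assumption for illustration: the `OAuthGrant` record, the `APPROVED_APPS` allowlist, the scope hints, and the app names are hypothetical, and this is not how Strac or any specific identity provider represents the data.

```python
from dataclasses import dataclass


@dataclass
class OAuthGrant:
    """Hypothetical record of one user granting one app a set of scopes."""
    app_name: str
    user: str
    scopes: tuple[str, ...]


# Assumption: the security team maintains an allowlist of sanctioned apps.
APPROVED_APPS = {"Approved Notes Assistant"}

# Assumption: substrings that suggest a scope reaches company data.
BROAD_SCOPE_HINTS = ("drive", "mail", "admin", "repo")


def flag_unmanaged_grants(grants):
    """Return (app, user, broad_scopes) for unapproved apps holding broad scopes."""
    flagged = []
    for grant in grants:
        if grant.app_name in APPROVED_APPS:
            continue  # sanctioned apps are out of scope for this check
        broad = [
            scope for scope in grant.scopes
            if any(hint in scope.lower() for hint in BROAD_SCOPE_HINTS)
        ]
        if broad:
            flagged.append((grant.app_name, grant.user, broad))
    return flagged
```

A simple substring match like this will produce false positives and misses; it only illustrates the idea of comparing existing grants against an approved list.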

Most traditional data loss prevention systems were designed to monitor:

AI usage doesn’t behave like that.

Sensitive data is often shared through:

From a traditional security perspective, this activity looks like normal user behavior.

No files are transferred.

No unusual network activity appears.

But sensitive data has still been exposed.

AI adoption isn’t slowing down. The challenge is enabling employees to use AI tools safely without exposing sensitive data.

Strac helps security teams gain visibility and control over Shadow AI and GenAI usage across the organization.

The goal isn’t to stop AI adoption.

It’s to enable AI safely while keeping control of sensitive data.

AI tools are becoming part of everyday work.

But without visibility and governance, Shadow AI can quietly expose sensitive company data.

Security teams need to understand:

Organizations that combine Shadow AI discovery, GenAI DLP, and AI governance can adopt AI safely without losing control of their data.

Shadow AI refers to AI tools employees use without approval from IT or security teams. These tools may connect to internal systems or receive sensitive data through prompts.

Employees may paste confidential information such as customer data, internal documents, or source code into AI tools that send this information outside the company environment.

GenAI DLP protects sensitive data during interactions with AI tools by detecting and blocking risky prompts or file uploads before they reach external models.
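
As a rough illustration of the "detect before it reaches the model" idea, the sketch below scans a prompt for a few sensitive-looking patterns and decides whether to block it. The pattern set, `scan_prompt`, and `should_block` are hypothetical names chosen for this example; production DLP relies on much broader and more carefully validated detection, and this is not a description of Strac's implementation.

```python
import re

# Illustrative patterns only; real detectors need validation and wider coverage.
PATTERNS = {
    "email": re.compile(r"\b[\w.+-]+@[\w-]+\.[\w.-]+\b"),
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "us_ssn": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}


def scan_prompt(prompt: str) -> list[str]:
    """Return the sorted names of every pattern that matches the prompt."""
    return sorted(name for name, pattern in PATTERNS.items() if pattern.search(prompt))


def should_block(prompt: str) -> bool:
    """Block the prompt if any sensitive pattern is found."""
    return bool(scan_prompt(prompt))
```

In practice a check like this would sit in a browser extension, proxy, or API gateway so it runs before the prompt leaves the environment; a policy could also redact matches instead of blocking outright.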

Organizations need visibility into AI usage, monitoring of AI prompts, and controls that prevent sensitive data from leaving the environment while still allowing employees to use AI tools productively.
